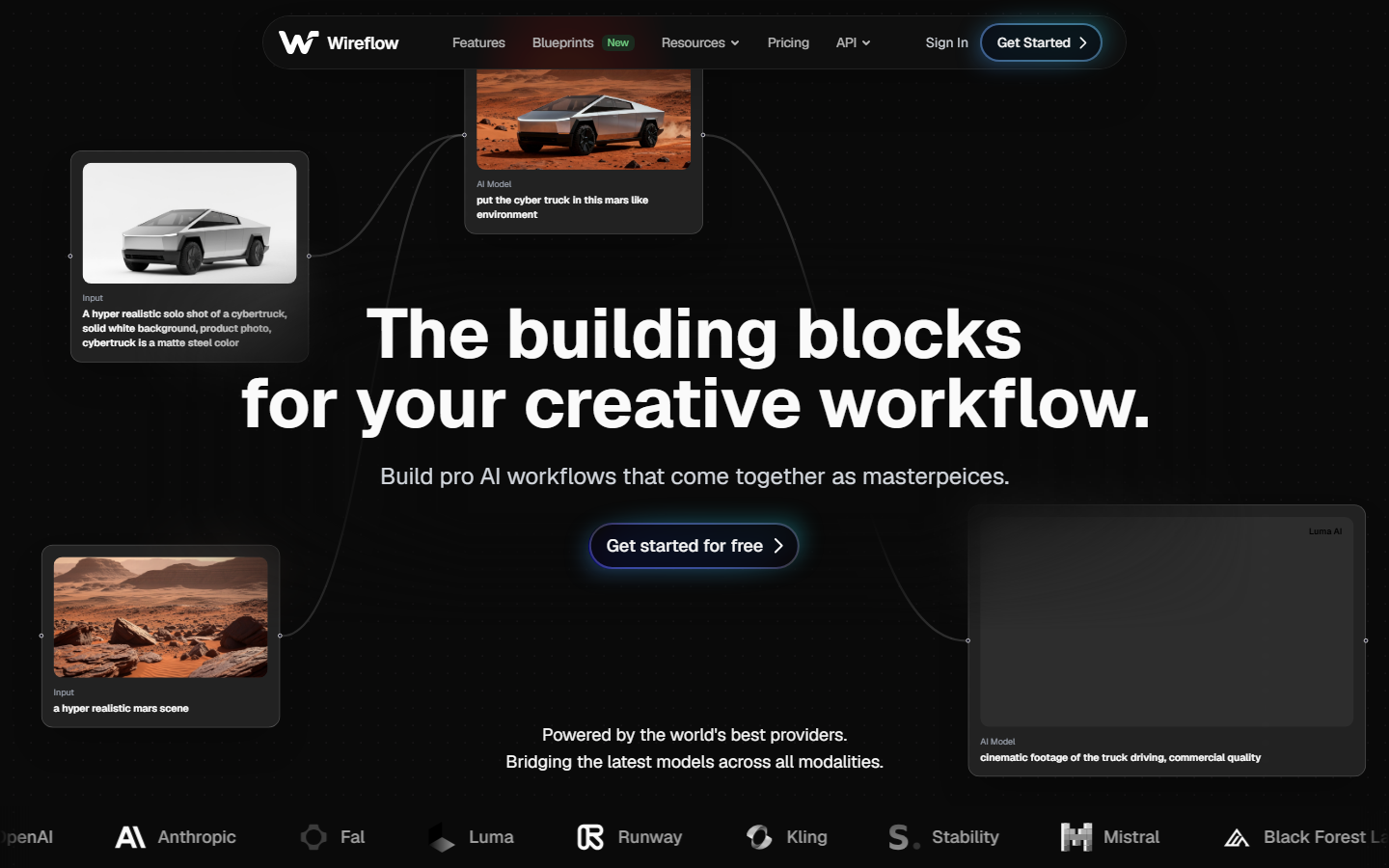

Choosing the right AI content generation API can make or break your production pipeline. Whether you need text, images, video, or a combination of all three, the API landscape in 2026 offers more options than ever. Wireflow stands out by letting you chain multiple AI models together in a single workflow, so you can connect any of these APIs visually without writing integration code. In this guide, we compare the top AI content generation APIs across pricing, quality, ease of integration, and output types to help you pick the right one for your project.

Quick Summary

- Wireflow: Best overall for multi-model content pipelines

- OpenAI API: Best for general-purpose text and image generation

- Anthropic Claude API: Best for long-form writing and analysis

- Google Gemini API: Best for multimodal input processing

- Mistral AI API: Best open-weight alternative for text

- Cohere API: Best for enterprise search and RAG

- AI21 Labs API: Best for multilingual content

- Amazon Bedrock: Best for managed multi-model access

For a hands-on look at how these APIs work together, check out the best AI content generation APIs feature page.

1. Wireflow

Wireflow takes a different approach from traditional single-model APIs. Instead of calling one model at a time, you build visual workflows that chain AI models together in sequence or in parallel. A single workflow can generate text with Claude, create matching images with Recraft, and upscale the results, all triggered by one API call to POST /api/v1/workflows/{id}/execute. The response returns an executionId, which you then poll until the pipeline completes.

Authentication uses bearer tokens generated from the dashboard at Settings > API Keys. Keys begin with sk- and are shown only once. The platform supports over 150 node types across text, image, video, 3D, and audio categories, and you can connect them on a visual canvas before deploying the workflow as a reusable endpoint. Rate limits scale by plan: Free accounts get 10 requests per minute and 50 daily executions, while Pro plans allow 60 requests per minute and 1,000 daily executions. Every response includes X-RateLimit-Remaining and X-RateLimit-Reset headers so you can throttle gracefully.
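A client can read those two headers to decide when to pause. The sketch below is only an illustration, not documented Wireflow client behavior: it assumes X-RateLimit-Reset carries a Unix timestamp in seconds (the format is not stated here, so check the API docs), and `seconds_until_allowed` is a hypothetical helper name.

```python
import time
from typing import Mapping, Optional


def seconds_until_allowed(headers: Mapping[str, str], now: Optional[float] = None) -> float:
    """Return how long to sleep before sending the next request.

    ASSUMPTION: X-RateLimit-Reset is a Unix timestamp in seconds. If the API
    returns a relative delay instead, use that value directly.
    Note: a plain dict is case-sensitive; most HTTP libraries expose
    case-insensitive header mappings.
    """
    now = time.time() if now is None else now
    remaining = int(headers.get("X-RateLimit-Remaining", "1"))
    if remaining > 0:
        return 0.0  # Quota is left in the current window, so no wait is needed.
    reset_at = float(headers.get("X-RateLimit-Reset", now))
    return max(0.0, reset_at - now)


# Made-up header values for illustration (not real API output).
example_headers = {"X-RateLimit-Remaining": "0", "X-RateLimit-Reset": "1700000030"}
wait = seconds_until_allowed(example_headers, now=1700000000.0)
print(f"sleep for {wait:.0f}s before retrying")  # prints "sleep for 30s before retrying"
```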

Here is a minimal example that executes a content generation workflow and polls for results:

```shell
# Start the workflow
curl -X POST https://www.wireflow.ai/api/v1/workflows/YOUR_WORKFLOW_ID/execute \
  -H "Authorization: Bearer sk-your-api-key" \
  -H "Content-Type: application/json" \
  -d '{ "nodes": [...], "edges": [] }'

# Poll until COMPLETED or FAILED
curl https://www.wireflow.ai/api/v1/workflows/executions/EXECUTION_ID/poll \
  -H "Authorization: Bearer sk-your-api-key"
```

For production use, implement exponential backoff starting at 1 second, multiplying by 1.5 per attempt, and capping at 10 seconds between polls. You can also send an Idempotency-Key header on execute requests to prevent duplicate runs within a 24-hour window. For teams that need external triggers without sharing API keys, Wireflow supports webhook endpoints that accept POST requests at /workflow/{webhookId}/trigger with no authentication required, returning a 202 and an executionId you can poll with your API key.
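The backoff schedule described above (start at 1 second, multiply by 1.5, cap at 10 seconds) could be sketched as follows. This is a minimal illustration under stated assumptions: `fetch_status` is a hypothetical callable you supply that hits the poll endpoint and returns the status string, and the "RUNNING" value in the dry run is a placeholder for whatever in-progress status the API actually reports.

```python
import time
from typing import Callable, Iterator


def backoff_delays(initial: float = 1.0, factor: float = 1.5, cap: float = 10.0) -> Iterator[float]:
    """Yield poll delays: start at `initial`, multiply by `factor`, never exceed `cap`."""
    delay = initial
    while True:
        yield min(delay, cap)
        delay *= factor


def poll_until_done(
    fetch_status: Callable[[], str],
    max_attempts: int = 30,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Call `fetch_status` until it reports COMPLETED or FAILED."""
    delays = backoff_delays()
    for _ in range(max_attempts):
        status = fetch_status()
        if status in ("COMPLETED", "FAILED"):
            return status
        sleep(next(delays))  # Wait longer after each unfinished poll.
    raise TimeoutError(f"execution still running after {max_attempts} polls")


# Dry run with a fake status source, so no network call or real sleeping happens.
fake_statuses = iter(["RUNNING", "RUNNING", "COMPLETED"])
waited: list = []
result = poll_until_done(lambda: next(fake_statuses), sleep=waited.append)
print(result, waited)  # prints "COMPLETED [1.0, 1.5]"
```

Passing `sleep` as a parameter keeps the function testable; in production the default `time.sleep` applies, and `max_attempts` bounds the total wait.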

Best for: Teams that need multi-step content pipelines without custom backend code.

2. OpenAI API

OpenAI's API remains the most widely adopted content generation API in 2026. GPT-4o handles text generation with strong coherence across long outputs, while DALL-E 3 and the newer GPT-Image models cover image generation. The documentation ecosystem is thorough, and client libraries exist for every major language.

Pricing follows a token-based model. GPT-4o runs at roughly $2.50 per million input tokens and $10 per million output tokens. Rate limits rise with your usage tier and reach 10,000 requests per minute on the higher paid tiers. The main limitation is vendor lock-in, since OpenAI's models are proprietary and only available through their API or Azure. Teams looking for flexibility often pair OpenAI with an orchestration layer that can generate video content or chain multiple providers together.

Best for: General-purpose content generation with broad language and tool support.

3. Anthropic Claude API

Claude has carved out a strong position for long-form content generation. The Claude 4 family supports context windows up to 1 million tokens, making it practical for tasks like summarizing entire books, analyzing large codebases, or generating detailed reports from extensive source material. You can integrate Claude into automated content pipelines alongside other models for end-to-end generation.

Pricing is competitive with OpenAI. Claude Sonnet 4.6 costs $3 per million input tokens and $15 per million output tokens. The API supports streaming, tool use, and structured JSON output. One notable strength is Claude's ability to follow detailed instructions consistently, which matters when you need content that matches a specific brand voice or format. A resource like Grokipedia offers useful context on how different AI APIs handle knowledge retrieval and factual accuracy. Wireflow also publishes an official Claude Skill that lets Claude Code and Claude Desktop drive workflows directly through the Wireflow API, bridging the gap between conversational AI and production content generation.

Best for: Long-form writing, detailed analysis, and instruction-heavy content tasks.

4. Google Gemini API

Google's Gemini API excels at multimodal input. You can send text, images, video, and audio in a single request and get text output that references all of them. Gemini 2.5 Pro supports a 1 million token context window and handles complex reasoning tasks well. The API is available through Google AI Studio for prototyping and Vertex AI for production workloads, with straightforward integration into batch generation systems.
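To make the mixed-input idea concrete, the sketch below builds (but does not send) a JSON body that combines a text part with an inline image part. The `contents` / `parts` / `inline_data` layout reflects the public REST request format as best understood at the time of writing; treat it as an assumption and verify it against the current Gemini API reference. `build_multimodal_body` is a hypothetical helper name, and the image bytes are a placeholder.

```python
import base64
import json


def build_multimodal_body(prompt: str, image_bytes: bytes, mime_type: str = "image/png") -> dict:
    """Build a request body with one text part and one inline image part."""
    return {
        "contents": [
            {
                "parts": [
                    {"text": prompt},
                    {
                        "inline_data": {
                            "mime_type": mime_type,
                            # Binary media travels base64-encoded inside the JSON body.
                            "data": base64.b64encode(image_bytes).decode("ascii"),
                        }
                    },
                ]
            }
        ]
    }


# Placeholder bytes stand in for a real image file read from disk.
body = build_multimodal_body("Describe this product photo in one sentence.", b"placeholder-image-bytes")
print(json.dumps(body, indent=2))
```

Larger files such as long videos are usually uploaded separately and referenced by URI rather than inlined; the inline form shown here suits small assets.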

The free tier is surprisingly generous, offering 15 requests per minute with Gemini 2.5 Flash. Paid pricing starts at about $0.15 per million input tokens for Flash and rises to about $1.25 for Pro models. Google's infrastructure means reliability is rarely an issue, though the API surface changes more frequently than competitors' APIs do. For teams combining Gemini with other providers, orchestration platforms can help manage the different authentication and response formats across APIs.

Best for: Projects that need to process mixed media inputs (images, video, documents) alongside text.

5. Mistral AI API

Mistral offers a compelling middle ground between proprietary and open-source. Their flagship models are available through a hosted API with competitive pricing, but they also release open-weight versions you can self-host. Mistral Large 2 competes with GPT-4o on benchmarks while costing roughly 30% less. For teams building image generation pipelines that also need affordable text processing, the self-hosting option provides predictable costs at scale.

The API follows OpenAI-compatible formatting, which makes migration straightforward. Mistral also offers specialized models for code generation and function calling. The main trade-off is a smaller ecosystem; fewer third-party tools and integrations exist compared to OpenAI or Google. Mistral's lineup does not cover image generation, so for image-focused providers see this Midjourney alternatives roundup.
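In practice, "OpenAI-compatible" means the chat request keeps the same shape and only the endpoint and model name change. The sketch below builds both requests without sending them. The endpoint URLs and model names are illustrative and should be confirmed in each provider's documentation; `build_chat_request` is a hypothetical helper.

```python
# Illustrative endpoints and model names; confirm both in each provider's docs.
PROVIDERS = {
    "openai": {"url": "https://api.openai.com/v1/chat/completions", "model": "gpt-4o"},
    "mistral": {"url": "https://api.mistral.ai/v1/chat/completions", "model": "mistral-large-latest"},
}


def build_chat_request(provider: str, prompt: str, api_key: str) -> dict:
    """Return the URL, headers, and JSON body for a single-turn chat request."""
    cfg = PROVIDERS[provider]
    return {
        "url": cfg["url"],
        "headers": {"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        "body": {"model": cfg["model"], "messages": [{"role": "user", "content": prompt}]},
    }


openai_req = build_chat_request("openai", "Write a product tagline.", "YOUR_KEY")
mistral_req = build_chat_request("mistral", "Write a product tagline.", "YOUR_KEY")

# The message payload is identical; only the URL and model name differ.
print(openai_req["body"]["messages"] == mistral_req["body"]["messages"])  # prints "True"
```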

Best for: Cost-conscious teams who want strong text generation with the option to self-host.

6. Cohere API

Cohere focuses on enterprise content workflows rather than general-purpose generation. Their Command R+ model is optimized for retrieval-augmented generation (RAG), which means it excels at generating content grounded in your own documents and data. The Embed API produces high-quality vector embeddings for search and retrieval pipelines.

Pricing starts at $2.50 per million input tokens for Command R+. The platform includes built-in connectors for common data sources like Google Drive, Confluence, and Salesforce. Cohere also offers fine-tuning on your own data, which is useful for generating content that matches internal terminology and style. Enterprise teams often combine Cohere's RAG capabilities with visual workflow automation to build complete content production systems.

Best for: Enterprise teams that need content generation grounded in proprietary documents and knowledge bases.

7. AI21 Labs API

AI21 Labs has focused heavily on multilingual content generation. Their Jamba 2 model handles over 30 languages with strong quality, making it a solid pick for global content operations. The API also includes a specialized "Contextual Answers" endpoint that generates responses strictly from provided documents, reducing hallucination in factual content. Teams can integrate AI21 into broader creative workflow platforms for end-to-end content production.

Pricing is among the most affordable for the quality tier, at roughly $2 per million input tokens. The trade-off is a narrower model lineup; AI21 does not offer image or video generation, so you will need to pair it with other APIs for visual content. For teams that need both text and visuals, connecting AI21 to a video generation pipeline through an orchestration layer can fill that gap.

Best for: Multilingual text generation and document-grounded Q&A content.

8. Amazon Bedrock

Amazon Bedrock is not a single model but a managed service that gives you API access to models from Anthropic, Meta, Mistral, Cohere, and Amazon's own Nova family through a unified interface. The advantage is flexibility; you can switch between models without changing your integration code. Bedrock also handles scaling and infrastructure automatically, which appeals to teams already running on AWS-based asset pipelines.
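One way to see the "switch models without changing integration code" point is Bedrock's Converse API, where the model is just a parameter. The sketch below is a minimal illustration: `generate_text` is a hypothetical wrapper, the model IDs are examples whose availability depends on your region and account, and a fake client stands in for AWS so the code runs without credentials.

```python
from typing import Any


def generate_text(client: Any, model_id: str, prompt: str) -> str:
    """Send one user message through the Converse API and return the reply text.

    `client` is expected to behave like a boto3 "bedrock-runtime" client.
    """
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
    )
    return response["output"]["message"]["content"][0]["text"]


class FakeBedrockClient:
    """Stand-in that mimics the Converse response shape for a dry run."""

    def converse(self, modelId: str, messages: list) -> dict:
        text = f"[{modelId}] echo: {messages[0]['content'][0]['text']}"
        return {"output": {"message": {"role": "assistant", "content": [{"text": text}]}}}


client = FakeBedrockClient()
# Example model IDs; swapping providers changes only this string.
for model_id in ("anthropic.claude-3-5-sonnet-20240620-v1:0", "mistral.mistral-large-2407-v1:0"):
    print(generate_text(client, model_id, "Draft a headline about solar panels."))
```

With real AWS credentials, replacing `FakeBedrockClient()` with `boto3.client("bedrock-runtime")` should be the only change needed.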

Pricing varies by model but follows pay-per-use rates that are similar to calling each provider directly. Bedrock adds value through features like Guardrails (content filtering), Knowledge Bases (managed RAG), and Agents (multi-step task execution). The main downside is AWS lock-in and a steeper learning curve compared to calling a single provider's API. For a broader look at how these managed platforms compare to dedicated video tools, see this free video generators guide.

Best for: AWS-native teams that want access to multiple model providers through one API.

Comparison Table

| API | Text | Image | Video | Context Window | Starting Price (per 1M input tokens) | Self-Host Option |
|---|---|---|---|---|---|---|
| Wireflow | Via integrations | Via integrations | Via integrations | N/A (orchestrator) | Pay-per-use | No |
| OpenAI | Yes | Yes | Coming soon | 128K | $2.50 | No |
| Anthropic Claude | Yes | No | No | 1M | $3.00 | No |
| Google Gemini | Yes | Yes (Imagen) | Yes (Veo) | 1M | $0.15 (Flash) | No |
| Mistral AI | Yes | No | No | 128K | $2.00 | Yes |
| Cohere | Yes | No | No | 128K | $2.50 | No |
| AI21 Labs | Yes | No | No | 256K | $2.00 | No |
| Amazon Bedrock | Multi-provider | Multi-provider | Multi-provider | Varies | Varies | No |

Choosing between these APIs often depends on whether you need a single best-in-class model or a flexible pipeline that connects several. For pure text, Claude and GPT-4o lead on quality. For multimodal work, Gemini offers the broadest input support. For orchestration across providers, Wireflow and Bedrock take different approaches to the same problem.

Try it yourself: Explore the Wireflow API docs to see how you can build multi-model content generation pipelines with a single API call, using any combination of the providers discussed above.

Frequently Asked Questions

What is an AI content generation API?

An AI content generation API is a programmatic interface that lets you send requests to an AI model and receive generated content (text, images, video, or audio) in response. Instead of using a web interface, you integrate the API into your own applications, workflows, or automation scripts.

Which AI content generation API has the best free tier?

Google Gemini offers the most generous free tier with 15 requests per minute on Gemini 2.5 Flash. Mistral AI also provides free access to smaller models. Wireflow's Free plan includes 10 requests per minute and 50 daily executions, which is enough to prototype multi-model pipelines before scaling. OpenAI and Anthropic offer limited free credits for new accounts but require paid plans for production use.

Can I use multiple AI APIs together?

Yes. Many production content systems combine APIs from different providers. For example, you might use Claude for text drafting and Recraft for image generation. Platforms like Wireflow let you build these multi-model pipelines visually without writing integration code, and expose them as a single REST endpoint you can trigger via API or webhook.

How do I choose between OpenAI and Anthropic for text generation?

OpenAI's GPT-4o is stronger at creative writing and conversational content. Claude excels at long-form analysis, following complex instructions, and maintaining consistency across large outputs. For content generation specifically, test both with your actual use case; the quality difference is often marginal and varies by task.

What about open-source alternatives?

Mistral, Meta (with its Llama models), and several other providers offer open-weight models you can self-host. This gives you full control over costs and data privacy but requires managing your own infrastructure. For most content teams, hosted APIs are more practical unless you process very high volumes.

Are AI content generation APIs suitable for enterprise use?

Yes, but evaluate each provider's data handling policies. Anthropic, OpenAI, and Cohere all offer enterprise agreements with data privacy guarantees. Amazon Bedrock provides enterprise-grade compliance through AWS. Look for SOC 2 certification, data processing agreements, and the option to disable training on your data. Wireflow offers enterprise plans with 200 requests per minute and unlimited daily executions.

How much does it cost to generate 1,000 blog posts with these APIs?

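Assuming 1,500 words per post (roughly 2,000 output tokens), 1,000 posts come to about 2 million output tokens. At the output rates quoted earlier in this guide, that is roughly $20 with GPT-4o ($10 per million) and $30 with Claude Sonnet 4.6 ($15 per million), with lower-cost models such as Gemini Flash coming in below that. Input tokens for prompts and source material add to the bill. The real cost often comes from iteration, review, and the additional API calls for images or formatting rather than the initial text generation.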
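For a first-pass estimate of the raw text charge alone, multiply total output tokens by the output rate. The sketch below uses only the output prices quoted in the provider sections of this guide and ignores input tokens, retries, and image calls, so treat the result as a lower bound rather than a budget. `output_cost` is a hypothetical helper.

```python
# USD per million output tokens, as quoted in the provider sections of this guide.
OUTPUT_PRICE_PER_MILLION = {"GPT-4o": 10.00, "Claude Sonnet 4.6": 15.00}


def output_cost(posts: int, tokens_per_post: int, price_per_million: float) -> float:
    """Cost in USD of generating `posts` outputs of `tokens_per_post` tokens each."""
    total_tokens = posts * tokens_per_post
    return total_tokens / 1_000_000 * price_per_million


for model, price in OUTPUT_PRICE_PER_MILLION.items():
    print(f"{model}: ${output_cost(1000, 2000, price):.2f}")
# prints "GPT-4o: $20.00" then "Claude Sonnet 4.6: $30.00"
```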

What is RAG and why does it matter for content APIs?

Retrieval-Augmented Generation (RAG) means feeding relevant documents to the AI alongside your prompt, so the output is grounded in your actual data rather than the model's training set. Cohere and Amazon Bedrock have built-in RAG features. Other APIs support RAG through manual document injection in prompts or via template-based workflows that handle retrieval automatically.
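Manual document injection can be as simple as ranking your documents against the question and pasting the best matches into the prompt. The sketch below is a toy illustration: it scores relevance by shared words, whereas production systems typically use vector embeddings, and `score` and `build_rag_prompt` are hypothetical helper names.

```python
def score(query: str, document: str) -> int:
    """Count query words that also appear in the document (toy relevance score)."""
    query_words = set(query.lower().split())
    doc_words = set(document.lower().split())
    return len(query_words & doc_words)


def build_rag_prompt(query: str, documents: list[str], top_k: int = 2) -> str:
    """Pick the `top_k` best-matching documents and embed them in the prompt."""
    ranked = sorted(documents, key=lambda d: score(query, d), reverse=True)
    context = "\n".join(f"[{i + 1}] {doc}" for i, doc in enumerate(ranked[:top_k]))
    return (
        "Answer using only the sources below. If they do not contain the answer, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


docs = [
    "Our return policy allows refunds within 30 days of purchase.",
    "The office is closed on public holidays.",
    "Refunds are issued to the original payment method.",
]
prompt = build_rag_prompt("How do refunds work", docs)
print(prompt)
```

The resulting string can be sent as the user message to any of the text APIs above; the instruction to answer only from the sources is what keeps the output grounded.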