Finding the right AI lip sync tool can make or break a multilingual video strategy. Whether you need to dub marketing content into dozens of languages or create talking-head videos from scratch, Wireflow lets you chain TTS, lip sync, and video models into a single automated pipeline. This guide covers the top eight platforms for AI-powered lip synchronization and dubbing in 2026, with honest comparisons on pricing, accuracy, and language support.

Quick Summary

- Wireflow - Best for automated lip sync pipelines with API access

- HeyGen - Best all-in-one AI video dubbing platform

- Synthesia - Best for enterprise L&D and training videos

- Sync Labs - Best API-first lip sync for developers

- ElevenLabs - Best voice quality for audio dubbing

- Rask AI - Best for collaborative localization teams

- Vozo AI - Best for multi-speaker precision lip sync

- Magic Hour - Best budget-friendly all-in-one option

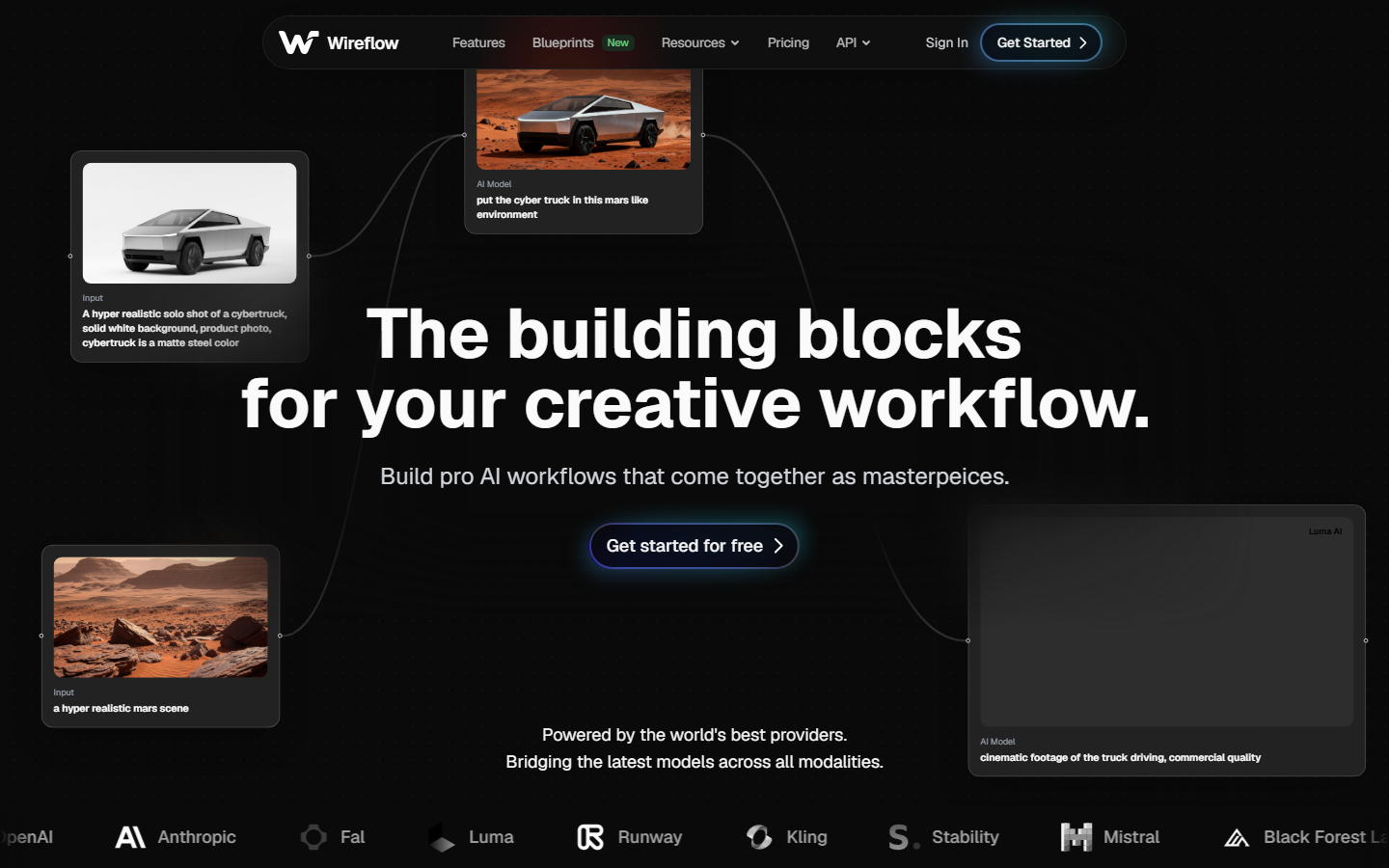

1. Wireflow

Wireflow takes a different approach to lip sync than standalone tools. Instead of uploading a video and waiting for a dubbed result, you build a visual workflow that connects text-to-speech nodes, lip sync models, and video outputs into a reusable pipeline. This means you can dub a single video into 20 languages by swapping one input node, not by re-uploading 20 times.

The platform supports Sync Labs, ElevenLabs, and other AI model chains natively. You drag nodes onto a canvas, connect them, and hit run. Every execution produces a shareable output with full API access for programmatic batch jobs.
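
The "swap one input node" idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Wireflow's actual API: `Job`, `tts_step`, `lip_sync_step`, and `dub_all` are hypothetical names, and the steps only produce file names where a real pipeline would call TTS and lip sync models.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Job:
    video: str      # source video file name
    script: str     # text to be spoken
    language: str   # target language code; the only input that changes per run


State = Dict[str, str]
Step = Callable[[Job, State], State]


def tts_step(job: Job, state: State) -> State:
    # Hypothetical stand-in for a text-to-speech node.
    return {**state, "audio": f"speech_{job.language}.wav"}


def lip_sync_step(job: Job, state: State) -> State:
    # Hypothetical stand-in for a lip sync node; it needs the TTS audio first.
    _audio = state["audio"]
    stem = job.video.rsplit(".", 1)[0]
    return {**state, "output": f"{stem}_{job.language}.mp4"}


PIPELINE: List[Step] = [tts_step, lip_sync_step]


def run_pipeline(job: Job, steps: List[Step] = PIPELINE) -> State:
    # Pass the accumulated state through each step in order.
    state: State = {}
    for step in steps:
        state = step(job, state)
    return state


def dub_all(video: str, script: str, languages: List[str]) -> List[str]:
    # Reuse the same pipeline; only the language input is swapped.
    return [run_pipeline(Job(video, script, lang))["output"] for lang in languages]
```

Calling `dub_all("promo.mp4", "Hello", ["es", "fr"])` runs the same two steps once per language, which is the reuse the visual workflow is meant to give you without re-uploading the video.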

Pricing: Free tier available; Pro starts at $29/month with API access.

Best for: Teams that need repeatable, scalable dubbing workflows rather than one-off translations.

2. HeyGen

HeyGen combines AI avatar generation with lip sync dubbing in a single platform. Its Video Translate feature takes existing footage, clones the speaker's voice, and re-renders mouth movements to match the translated audio. The platform claims 0.02-second facial sync accuracy, and in practice the results hold up well for clips under 15 minutes.

Language support covers 175+ languages, which is among the broadest in this category. HeyGen also offers AI avatar creation with custom digital presenters, making it useful for teams that produce both original and dubbed content.

Pricing: Free plan includes 3 videos/month. Creator plan at $24/month for unlimited videos.

Best for: Marketing teams that want avatar creation and dubbing in one tool.

3. Synthesia

Synthesia focuses on enterprise training and internal communications. Its dubbing engine preserves speaker identity across languages while adjusting lip movements to match translated scripts. The platform includes a full video editor, so L&D teams can create, translate, and distribute training materials without leaving the app.

Voice preservation is a standout feature. Synthesia captures the speaker's vocal characteristics and applies them consistently across all target languages. This matters for executive communications and branded content where voice consistency is non-negotiable. The platform also offers AI voiceover generation with 200+ pre-built voices as a fallback.

Pricing: Business plans start around $1,000/month. Starter plans available for smaller teams.

Best for: Enterprise L&D departments with budget for premium dubbing quality.

4. Sync Labs

Sync Labs is purpose-built for developers who need lip sync as an API. The platform offers both a web studio for quick jobs and a REST API for production integrations. Video input goes in, synced video comes out, with no extra features getting in the way.

The API supports both audio-driven and text-driven lip sync modes. Audio-driven mode takes existing audio and syncs it to a face in the video. Text-driven mode generates speech and syncs it in one step. Latency sits around 2-4 seconds for short clips, making it viable for near-real-time applications.
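
To make the two modes concrete, here is a rough sketch of how a client might build a request for each one. The field names (`mode`, `video`, `audio`, `text`) and the `build_request` helper are assumptions for illustration only; they are not taken from the Sync Labs API reference, so check the official docs before wiring anything up.

```python
from typing import Dict, Optional


def build_request(
    video_url: str,
    audio_url: Optional[str] = None,
    text: Optional[str] = None,
) -> Dict[str, str]:
    """Build a hypothetical lip sync request payload.

    Exactly one of audio_url (audio-driven mode) or text (text-driven mode)
    must be supplied.
    """
    if (audio_url is None) == (text is None):
        raise ValueError("provide exactly one of audio_url or text")
    if audio_url is not None:
        # Audio-driven: sync existing audio to the face in the video.
        return {"mode": "audio", "video": video_url, "audio": audio_url}
    # Text-driven: the service would generate speech, then sync it.
    return {"mode": "text", "video": video_url, "text": text}
```

Rejecting requests that carry both inputs, or neither, keeps the mode unambiguous before anything is sent over the network.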

Pricing: Pay-per-use API pricing. Free tier available for testing.

Best for: Developers building lip sync into their own products or workflow pipelines.

5. ElevenLabs

ElevenLabs started as a voice cloning platform and has expanded into full dubbing workflows. Its Dubbing Studio handles video translation with automatic transcription, translation, and voice cloning in a single pipeline. The voice quality is widely considered the best in the industry, with near-indistinguishable clones from just a few minutes of sample audio.

The platform supports 32 languages for dubbing with lip sync. While that is narrower than HeyGen or Rask AI, the output quality per language tends to be higher. ElevenLabs also offers a standalone voice cloning API that integrates well with external lip sync tools.

Pricing: Free tier with limited minutes. Creator plan at $22/month. Scale plan at $99/month.

Best for: Creators who prioritize voice realism above all else.

6. Rask AI

Rask AI is built for localization teams that manage high volumes of video content. The platform supports 135 languages and includes collaborative features like team workspaces, approval workflows, and glossary management. Lip sync is integrated into the translation pipeline, so dubbed videos come out with adjusted mouth movements by default.

What sets Rask AI apart is the editorial layer. Translators can review and edit transcriptions before dubbing, adjust timing, and flag segments that need human attention. This hybrid approach produces more accurate results than fully automated tools, especially for marketing videos with nuanced messaging.

Pricing: Starts at $49/month for individuals. Team plans available.

Best for: Localization teams managing multi-language video libraries.

7. Vozo AI

Vozo AI differentiates itself with multi-speaker support and dual processing modes. Standard mode prioritizes speed for quick turnarounds. Precision mode uses additional processing passes for higher accuracy on complex scenes with multiple speakers or rapid dialogue. The platform supports 200+ languages and provides both batch processing and API access.

The multi-speaker detection automatically identifies different speakers in a video and applies individual voice clones and lip sync adjustments to each one. This is particularly useful for interview-style content, panel discussions, and dialogue-heavy videos where single-speaker tools fall short.
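
Conceptually, per-speaker processing starts by grouping detected speech segments by speaker so each group can get its own voice clone and lip sync pass. The sketch below assumes the segments are already labeled by some upstream speaker-detection step; `Segment` and `group_by_speaker` are hypothetical names and do not reflect how Vozo AI implements this internally.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple, Tuple


class Segment(NamedTuple):
    speaker: str   # label assigned by an assumed upstream detection step
    start: float   # segment start time in seconds
    end: float     # segment end time in seconds


def group_by_speaker(segments: List[Segment]) -> Dict[str, List[Tuple[float, float]]]:
    # Collect each speaker's time ranges in chronological order.
    grouped: Dict[str, List[Tuple[float, float]]] = defaultdict(list)
    for seg in sorted(segments, key=lambda s: s.start):
        grouped[seg.speaker].append((seg.start, seg.end))
    return dict(grouped)
```

For an interview with a host and a guest, the result maps each speaker to the time ranges that need their own clone and sync adjustments, which is the step single-speaker tools skip.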

Pricing: Free trial available. Paid plans start at $19/month.

Best for: Videos with multiple speakers or dialogue-heavy content.

8. Magic Hour

Magic Hour bundles lip sync with a broader set of AI video creation tools, including face swap, image-to-video conversion, and style transfer. The lip sync feature is simpler than dedicated tools like Sync Labs or Vozo AI, but it handles basic dubbing tasks at a fraction of the cost.

The platform works well for content creators who need occasional lip sync alongside other video effects. Upload a video, provide new audio or text, and Magic Hour generates a synced version. Quality is acceptable for social media content, though it may not meet broadcast standards for longer-form production.

Pricing: Free tier with watermarks. Pro at $9.99/month.

Best for: Budget-conscious creators who need lip sync plus other AI video tools.

Comparison Table

| Tool | Languages | Lip Sync Quality | API Access | Starting Price | Best For |
|---|---|---|---|---|---|
| Wireflow | Multi-model | Pipeline-dependent | Yes | Free / $29 | Automated workflows |
| HeyGen | 175+ | High | Yes | Free / $24 | All-in-one video |
| Synthesia | 120+ | High | Yes | ~$1,000 | Enterprise L&D |
| Sync Labs | 30+ | Very High | Yes (API-first) | Pay-per-use | Developers |
| ElevenLabs | 32 | Very High | Yes | Free / $22 | Voice quality |
| Rask AI | 135 | High | Yes | $49 | Localization teams |
| Vozo AI | 200+ | High | Yes | Free / $19 | Multi-speaker |
| Magic Hour | 20+ | Medium | No | Free / $9.99 | Budget creators |

How to Choose the Right Lip Sync Tool

Picking the right tool depends on three factors: volume, quality requirements, and integration needs.

For high-volume dubbing across many languages, Rask AI and HeyGen offer the best combination of language support and collaborative features. Both handle batch video generation workflows well.

For maximum voice and sync quality, ElevenLabs and Sync Labs lead the pack. ElevenLabs wins on voice realism while Sync Labs delivers the tightest lip synchronization through its dedicated API.

For developer integration, Sync Labs provides the most straightforward API. Teams that need to combine lip sync with other AI models (text-to-speech, image generation, video pipelines) benefit from a visual orchestration layer such as Wireflow, which connects these services into a single automated flow.

For budget-conscious creators, Magic Hour and Vozo AI offer solid lip sync at accessible price points, with Vozo AI providing better multi-speaker handling.
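
If you prefer to filter rather than read, the comparison table can be encoded as data and queried. This is a small sketch: the figures are copied from the table above (minimum language counts and lowest listed monthly price in USD), `None` marks values the table does not give as a number, and `shortlist` is a hypothetical helper that skips a tool whenever a requested filter hits an unknown value.

```python
from typing import List, NamedTuple, Optional


class Tool(NamedTuple):
    name: str
    languages: Optional[int]   # minimum language count from the table
    api: bool                  # API access listed in the table
    monthly: Optional[float]   # lowest listed monthly price in USD


TOOLS = [
    Tool("Wireflow", None, True, 29.0),      # languages listed as "Multi-model"
    Tool("HeyGen", 175, True, 24.0),
    Tool("Synthesia", 120, True, 1000.0),    # listed as roughly $1,000
    Tool("Sync Labs", 30, True, None),       # pay-per-use, no monthly figure
    Tool("ElevenLabs", 32, True, 22.0),
    Tool("Rask AI", 135, True, 49.0),
    Tool("Vozo AI", 200, True, 19.0),
    Tool("Magic Hour", 20, False, 9.99),
]


def shortlist(
    min_languages: int = 0,
    need_api: bool = False,
    max_monthly: Optional[float] = None,
) -> List[str]:
    picks = []
    for t in TOOLS:
        if min_languages and (t.languages is None or t.languages < min_languages):
            continue
        if need_api and not t.api:
            continue
        if max_monthly is not None and (t.monthly is None or t.monthly > max_monthly):
            continue
        picks.append(t.name)
    return picks
```

For example, `shortlist(min_languages=100, need_api=True, max_monthly=50)` narrows the field to the tools that list 100+ languages, an API, and a plan at or under $50/month. Quality and collaboration features are not captured here, so treat the result as a starting point.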

Try it yourself: Build this lip sync workflow in Wireflow. The nodes are pre-configured with ElevenLabs TTS and Sync Labs lip sync, the same model chain described in the Wireflow section above.

FAQ

What is AI lip sync?

AI lip sync uses machine learning to adjust mouth movements in a video so they match new audio or translated speech. The AI analyzes the original facial movements and re-renders them frame by frame to align with the target audio track.

How accurate is AI lip sync compared to manual dubbing?

Modern AI lip sync tools achieve 85-95% accuracy for most languages. Tonal languages (Mandarin, Vietnamese) and languages with very different mouth shapes from the source can see lower accuracy. Manual dubbing by professional studios still produces better results, but takes 10-50x longer and costs significantly more.

Can AI lip sync handle multiple speakers in one video?

Some tools can. Vozo AI and Rask AI both offer multi-speaker detection that identifies different faces and applies individual lip sync adjustments. Most other tools require you to process each speaker separately or limit lip sync to the primary speaker.

Is AI dubbing good enough for professional broadcast?

For social media, YouTube, and corporate training, AI dubbing quality is production-ready with tools like HeyGen, Synthesia, and ElevenLabs. For theatrical release or broadcast television, most studios still use AI as a first pass followed by human refinement.

How long does AI lip sync processing take?

Processing time varies by tool and video length. Short clips (under 2 minutes) typically process in 30-90 seconds. Longer videos (10-30 minutes) can take 5-15 minutes. API-based tools like Sync Labs tend to be faster than browser-based platforms.

Do AI lip sync tools preserve the original speaker's voice?

Most modern tools offer voice cloning that preserves the speaker's vocal characteristics across languages. ElevenLabs and HeyGen are particularly strong at this, requiring only a few minutes of sample audio to create a convincing voice clone.

What video formats do AI lip sync tools support?

MP4 is universally supported. Most tools also accept MOV, AVI, and WebM. Output is typically MP4 at the same resolution as the input. Some enterprise tools like Synthesia support 4K output.

Can I use AI lip sync tools via API?

Yes, several tools offer API access. Sync Labs is API-first and provides the most complete developer documentation. ElevenLabs, HeyGen, and Vozo AI also offer APIs. For teams that need to chain multiple APIs together, a visual workflow builder can simplify the integration.