ComfyUI is a powerful node-based tool for building AI image workflows, but running it well takes a capable local GPU and a fair amount of setup. If you want the same creative control without buying hardware, Wireflow and several other cloud platforms now offer visual workflow builders or hosted APIs that run entirely on remote GPUs. This guide covers the seven best ComfyUI alternatives that require no local GPU in 2026, ranked by capability, ease of use, and value.

Quick Summary

- Wireflow - Best Overall (cloud canvas with multi-model chaining and full API)

- RunComfy - Best for ComfyUI Users (hosted ComfyUI with 200+ preloaded nodes)

- ThinkDiffusion - Best for Quick Setup (one-click cloud ComfyUI sessions)

- Floyo - Best for Beginners (simplified browser-based node editor)

- ComfyDeploy - Best for Deployment (turn ComfyUI workflows into serverless endpoints)

- fal.ai - Best for Developers (serverless inference API with fast cold starts)

- Replicate - Best for Model Variety (thousands of open-source models via API)

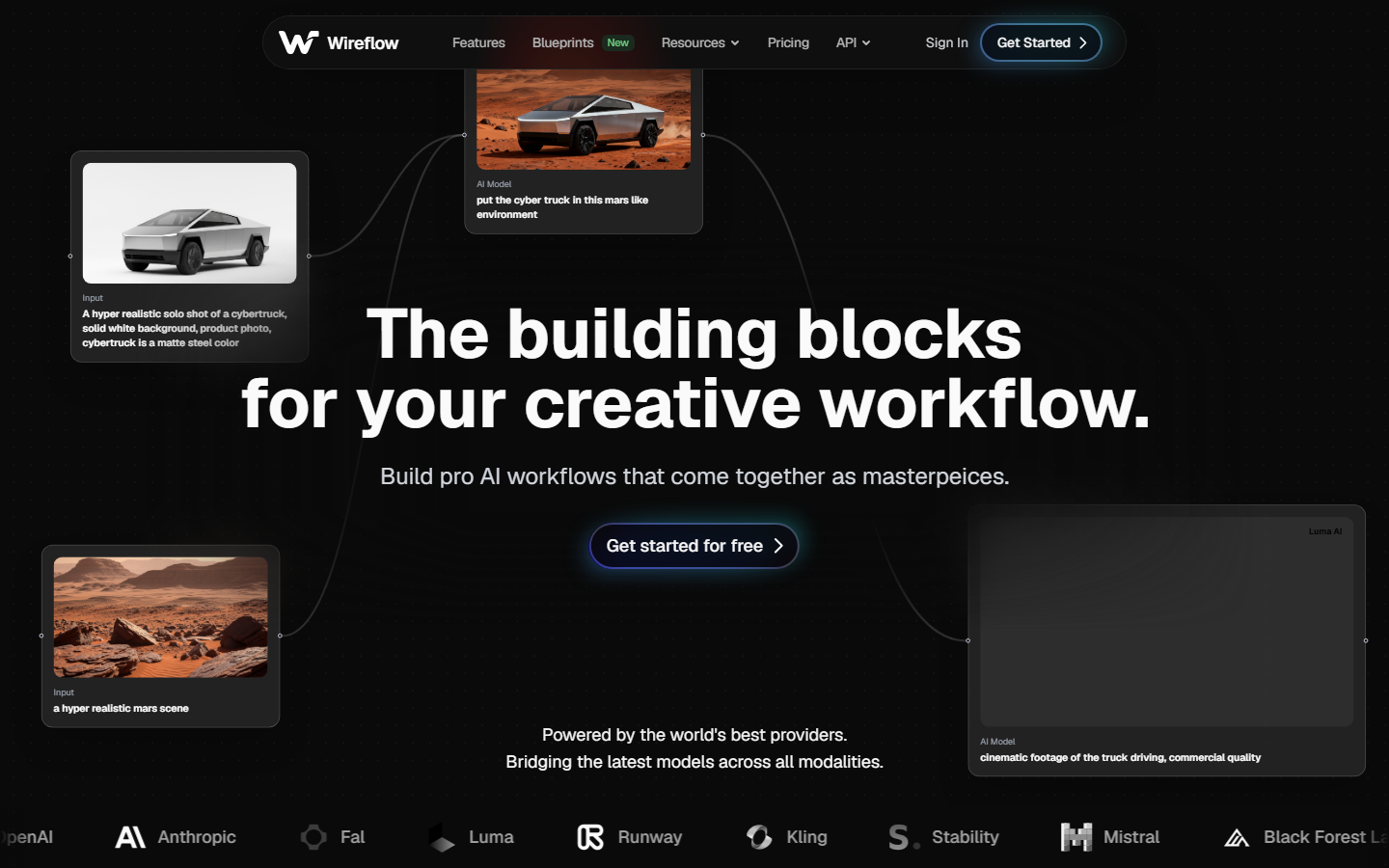

1. Wireflow

Wireflow is a cloud-native visual workflow builder that lets you chain multiple AI models on a drag-and-drop canvas. Unlike a local ComfyUI install, where every model has to be downloaded and run on your own hardware, Wireflow connects to hosted models such as Flux Pro, DALL-E, Kling, and dozens more through a single interface. Every node runs on remote GPUs, so your laptop never breaks a sweat.

For a hands-on comparison of how Wireflow stacks up against ComfyUI, check out the ComfyUI alternative feature page.

Key strengths include the visual node editor that mirrors ComfyUI's graph layout, built-in image generation, video generation, upscaling, and background removal nodes, plus a REST API for running workflows programmatically. Pricing starts with a free tier and scales based on compute usage.

2. RunComfy

RunComfy gives you the actual ComfyUI interface running on cloud GPUs. If you already have ComfyUI workflows and custom nodes, RunComfy lets you upload and run them without any hardware changes. The platform comes with over 200 preloaded nodes and popular models like SDXL, Flux, and various LoRAs. Sessions spin up in under a minute, and you get a familiar node graph that works exactly like the local version.

Pricing is based on GPU time, with options ranging from T4 instances for basic work to A100 GPUs for heavy batch processing. The main trade-off is latency: each session requires a cold start, and you are still limited to the models and custom nodes that ComfyUI itself can load.

3. ThinkDiffusion

ThinkDiffusion offers one-click cloud sessions for both ComfyUI and Automatic1111. You pick a GPU tier, launch a session, and get a full ComfyUI environment in your browser within seconds. The service handles model downloads, extension management, and VRAM allocation automatically. ThinkDiffusion is a solid option for anyone who wants the exact ComfyUI experience but does not own a compatible GPU.

Plans are subscription-based with hourly credits, which can get expensive for heavy use. Custom node support is broad but not unlimited; some community extensions require manual installation through the terminal. For teams looking at hosted API access, ThinkDiffusion also provides an API endpoint to trigger workflows remotely.

4. Floyo

Floyo is a browser-based platform designed specifically for users who find ComfyUI's learning curve too steep. The interface simplifies node connections with color-coded ports and guided templates for common tasks like text-to-image, inpainting, and ControlNet workflows. All processing runs on cloud GPUs, and the platform supports SDXL, Hunyuan, and Flux models out of the box.

Floyo includes pre-built workflow templates that cover popular AI creative workflows such as image generation, video creation, and LoRA training. The free tier is limited to lower-resolution outputs, but paid plans unlock full resolution and batch processing. Compared to the cloud API tools in this space, Floyo focuses more on the visual builder experience than developer integration.

5. ComfyDeploy

ComfyDeploy takes a different approach: instead of giving you a cloud editor, it lets you package existing ComfyUI workflows into serverless API endpoints. You design your workflow locally (or import a JSON file), push it to ComfyDeploy, and get a REST API that anyone can call. This is ideal for developers who want to productionize ComfyUI workflows without managing GPU infrastructure.

The platform supports batch generation through queued API calls and integrates with CI/CD pipelines. Pricing is per-invocation, which keeps costs predictable for production workloads. The downside is that you still need a local ComfyUI installation for designing workflows; ComfyDeploy only handles the deployment and scaling side.
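As a rough illustration of what "batch generation through queued calls" can look like on the client side, the sketch below takes a workflow exported from ComfyUI in its API format (a JSON object mapping node ids to a `class_type` and `inputs`) and prepares one copy per seed. The tiny two-node workflow and the helper names `find_nodes` and `make_batch` are assumptions made for this example; how each copy is submitted depends on the deployment platform's own API, which is not shown here.

```python
import copy
import json

# Tiny stand-in for a workflow exported from ComfyUI in API format:
# node id -> {"class_type": ..., "inputs": {...}}. Real exports have more nodes.
WORKFLOW_JSON = """
{
  "3": {"class_type": "KSampler", "inputs": {"seed": 0, "steps": 20}},
  "6": {"class_type": "CLIPTextEncode", "inputs": {"text": "a lighthouse at dusk"}}
}
"""


def find_nodes(workflow, class_type):
    """Return the ids of all nodes with the given class_type."""
    return [node_id for node_id, node in workflow.items()
            if node.get("class_type") == class_type]


def make_batch(workflow, seeds):
    """Build one independent workflow copy per seed, ready to be queued."""
    sampler_ids = find_nodes(workflow, "KSampler")
    batch = []
    for seed in seeds:
        variant = copy.deepcopy(workflow)  # never mutate the original graph
        for node_id in sampler_ids:
            variant[node_id]["inputs"]["seed"] = seed
        batch.append(variant)
    return batch


workflow = json.loads(WORKFLOW_JSON)
batch = make_batch(workflow, seeds=[1, 2, 3])
print(len(batch), [v["3"]["inputs"]["seed"] for v in batch])
```

Keeping the original graph untouched and varying only the sampler seed means every queued job can be traced back to the same source workflow.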

6. fal.ai

fal.ai is a serverless AI inference platform that provides fast API access to popular generative models. Rather than replicating ComfyUI's node graph, fal.ai exposes each model as a standalone API endpoint with sub-second cold starts. This makes it a strong choice for developers building applications that need AI pipeline automation without a visual editor.

The model library includes Flux, Stable Diffusion, LLaVA, Whisper, and others. You can chain multiple API calls in your own code to replicate what a ComfyUI workflow does, though you lose the visual feedback loop. Pricing is per-second of GPU compute, and the headless workflow approach keeps overhead minimal for high-volume production use cases.
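Chaining API calls in code mostly comes down to passing one step's output to the next step's input. The sketch below shows that pattern with a text-to-image step followed by an upscale step. Both step functions are hypothetical stand-ins that return fake URLs so the example runs offline; in a real integration each would send a request to the provider and parse its response.

```python
from typing import Any, Callable, Dict, List

Step = Callable[[Dict[str, Any]], Dict[str, Any]]


def run_chain(steps: List[Step], initial: Dict[str, Any]) -> Dict[str, Any]:
    """Run steps in order, feeding each step's output into the next."""
    data = initial
    for step in steps:
        data = step(data)
    return data


# Hypothetical stand-ins for hosted model calls (no network access here).
def text_to_image(inputs: Dict[str, Any]) -> Dict[str, Any]:
    return {"image_url": f"https://example.com/{len(inputs['prompt'])}.png"}


def upscale(inputs: Dict[str, Any]) -> Dict[str, Any]:
    return {"image_url": inputs["image_url"].replace(".png", "_4x.png")}


result = run_chain([text_to_image, upscale], {"prompt": "a red fox"})
print(result["image_url"])
```

Each step only agrees on the shape of the dictionary it receives and returns, which plays the role that node connections play in a visual graph.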

7. Replicate

Replicate hosts thousands of open-source AI models behind a unified API. Any model published on the platform can be called with a single API request, from Flux and SDXL to video generators and audio models. For users who want ComfyUI's model variety without the local setup, Replicate offers the broadest catalog. The platform also supports model chaining through its prediction API, where the output of one model feeds into the next.

Pricing is pay-per-prediction based on the hardware each model runs on. Replicate recently added a web-based playground for testing models interactively, but it lacks the node-graph workflow builder that canvas-based tools provide. For pure API-driven workflows, though, it remains one of the most flexible options.

Comparison Table

| Platform | Interface | GPU Required | ComfyUI Compatible | API Access | Free Tier | Best For |
|---|---|---|---|---|---|---|
| Wireflow | Visual canvas | No | Via import | Full REST API | Yes | Multi-model workflows |
| RunComfy | Hosted ComfyUI | No (cloud) | Full | Limited | Yes | Existing ComfyUI users |
| ThinkDiffusion | Hosted ComfyUI | No (cloud) | Full | Yes | No | Quick cloud sessions |
| Floyo | Simplified nodes | No (cloud) | Partial | No | Yes | Beginners |
| ComfyDeploy | Deploy-only | Local for design | Full | Full REST API | Yes | Production deployment |
| fal.ai | API-only | No | No | Full REST API | Yes | Developers, fast inference |
| Replicate | API + playground | No | No | Full REST API | Yes | Model variety |

What to Consider When Choosing

Selecting the right ComfyUI alternative depends on your workflow and technical background. If you have existing ComfyUI workflows you want to keep using, RunComfy or ThinkDiffusion will give you the smoothest transition since they run the actual ComfyUI interface. If you are starting fresh and want access to models beyond the Stable Diffusion ecosystem, a multi-model workflow builder like Wireflow or a broad API platform like Replicate will offer more flexibility.

For production use cases where you need to serve AI-generated content at scale, ComfyDeploy and fal.ai provide the infrastructure-level tools for building reliable AI pipelines. Cost is another factor: cloud GPU time adds up quickly for heavy users, so compare per-hour pricing against per-prediction pricing to find the model that fits your usage pattern.
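One way to make the per-hour versus per-prediction comparison concrete is to estimate a month of usage under both billing models. The sketch below does that with made-up figures: the rates, hours, and image counts are illustrative assumptions, not quotes from any platform, and session billing is assumed to include idle time while the session is open.

```python
def session_cost(hours: float, rate_per_hour: float) -> float:
    """Cost of time-billed GPU sessions, idle time included."""
    return hours * rate_per_hour


def prediction_cost(images: int, price_per_image: float) -> float:
    """Cost when every generated image is billed individually."""
    return images * price_per_image


def cheaper_option(hours: float, rate_per_hour: float,
                   images: int, price_per_image: float) -> str:
    """Name the cheaper billing model for the given monthly usage."""
    hourly = session_cost(hours, rate_per_hour)
    per_image = prediction_cost(images, price_per_image)
    return "per-hour" if hourly < per_image else "per-prediction"


# Illustrative month: 20 session hours at $1.00/hour vs. $0.10 per image.
print(cheaper_option(20, 1.00, images=300, price_per_image=0.10))
print(cheaper_option(20, 1.00, images=100, price_per_image=0.10))
```

With these assumed numbers, the same 20 hours of sessions win at 300 images but lose at 100, which is why output volume per session matters more than the headline rate.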

Try it yourself: Build this workflow in Wireflow - the nodes are pre-configured with a text-to-image plus upscale pipeline that runs entirely in the cloud, no GPU needed.

Frequently Asked Questions

Can I run ComfyUI without a GPU?

ComfyUI can technically run on CPU, but generation times stretch from seconds to several minutes per image. Cloud alternatives like RunComfy and ThinkDiffusion give you the full ComfyUI interface on remote GPUs, so you get fast results without local hardware.

Are cloud ComfyUI alternatives more expensive than buying a GPU?

It depends on usage volume. A mid-range GPU costs $400-800 upfront, while cloud platforms charge $0.10-0.50 per generation or $0.50-2.00 per GPU hour. Casual users typically save money with cloud tools, while heavy daily users may break even within 3-6 months of GPU ownership.
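The break-even estimate can be worked through with a few lines of arithmetic. The sketch below assumes a $600 GPU and a $1.00 per GPU hour cloud rate, both picked from inside the ranges above purely for illustration, and it ignores electricity, resale value, and the rest of the PC.

```python
def break_even_months(gpu_price: float, rate_per_hour: float,
                      hours_per_day: float, days_per_month: int = 30) -> float:
    """Months of cloud spending needed to equal the upfront GPU price."""
    monthly_cloud_cost = rate_per_hour * hours_per_day * days_per_month
    return gpu_price / monthly_cloud_cost


heavy_user = break_even_months(600, 1.00, hours_per_day=4)
casual_user = break_even_months(600, 1.00, hours_per_day=0.5)
print(heavy_user, casual_user)
```

Under these assumptions, four hours a day reaches break-even in about five months, while half an hour a day takes more than three years.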

Can I use my existing ComfyUI workflows on these platforms?

RunComfy, ThinkDiffusion, and ComfyDeploy support direct ComfyUI workflow imports. Wireflow uses its own node format but covers the same model types. fal.ai and Replicate require you to rebuild workflows as API call chains.

Which alternative is best for beginners?

Floyo offers the simplest onboarding with guided templates and color-coded node connections. Wireflow is also beginner-friendly with its drag-and-drop canvas while offering more advanced capabilities for growth.

Do these platforms support Flux and SDXL models?

Yes. All seven platforms listed here support at least one Flux variant and SDXL. Wireflow and Replicate offer the widest model selection, including models from providers outside the Stable Diffusion ecosystem.

Can I build video generation workflows without a GPU?

Several of these platforms support video models. Wireflow includes Kling, Seedance, and other video generation nodes on its canvas. Replicate hosts Stable Video Diffusion, CogVideo, and similar models via API.

Is there a fully free ComfyUI alternative?

Most platforms offer limited free tiers. Wireflow, RunComfy, Floyo, and Replicate all provide free credits or free-tier access. For unlimited free usage, ComfyUI on CPU remains an option, though performance will be significantly slower.

How do cloud alternatives handle custom models and LoRAs?

RunComfy and ThinkDiffusion support custom LoRA uploads directly. ComfyDeploy packages custom models alongside your workflow. Replicate lets you push any model to the platform. Wireflow supports custom model integration through its node system.

Conclusion

Running AI image and video workflows no longer requires a local GPU. Whether you need the exact ComfyUI interface in the cloud, a simplified visual builder, or a pure API approach, the platforms above cover each of these approaches. Wireflow stands out for users who want a visual canvas with access to models from multiple providers, while RunComfy and ThinkDiffusion serve those who prefer the familiar ComfyUI environment. Evaluate your workflow complexity, model requirements, and budget to pick the tool that fits best.