Runway's Gen-3 Alpha model pushed AI video generation forward, but its API remains gated behind enterprise contracts, and per-second pricing adds up fast for production workloads. If you need programmatic access to video and image models without vendor lock-in, several platforms now offer open REST APIs with pay-per-request billing. Wireflow takes this further by letting you chain multiple AI models into visual workflows that expose a single API endpoint, so you get multi-model orchestration without writing glue code.

Quick summary:

- Wireflow: Multi-model workflow API with visual editor (Best Overall)

- Replicate: Model marketplace with per-second billing (Best for Model Variety)

- Fal.ai: Serverless inference with fast cold starts (Best for Speed)

- Luma AI: Dream Machine API for spatial video (Best for 3D/Spatial)

- Pika: Affordable text-to-video API (Best Budget Option)

- ComfyDeploy: ComfyUI workflows as API endpoints (Best for ComfyUI Users)

- Stability AI: Open-weight video diffusion models (Best Open Source)

- RunPod: Serverless GPU endpoints for custom models (Best for Custom Deployments)

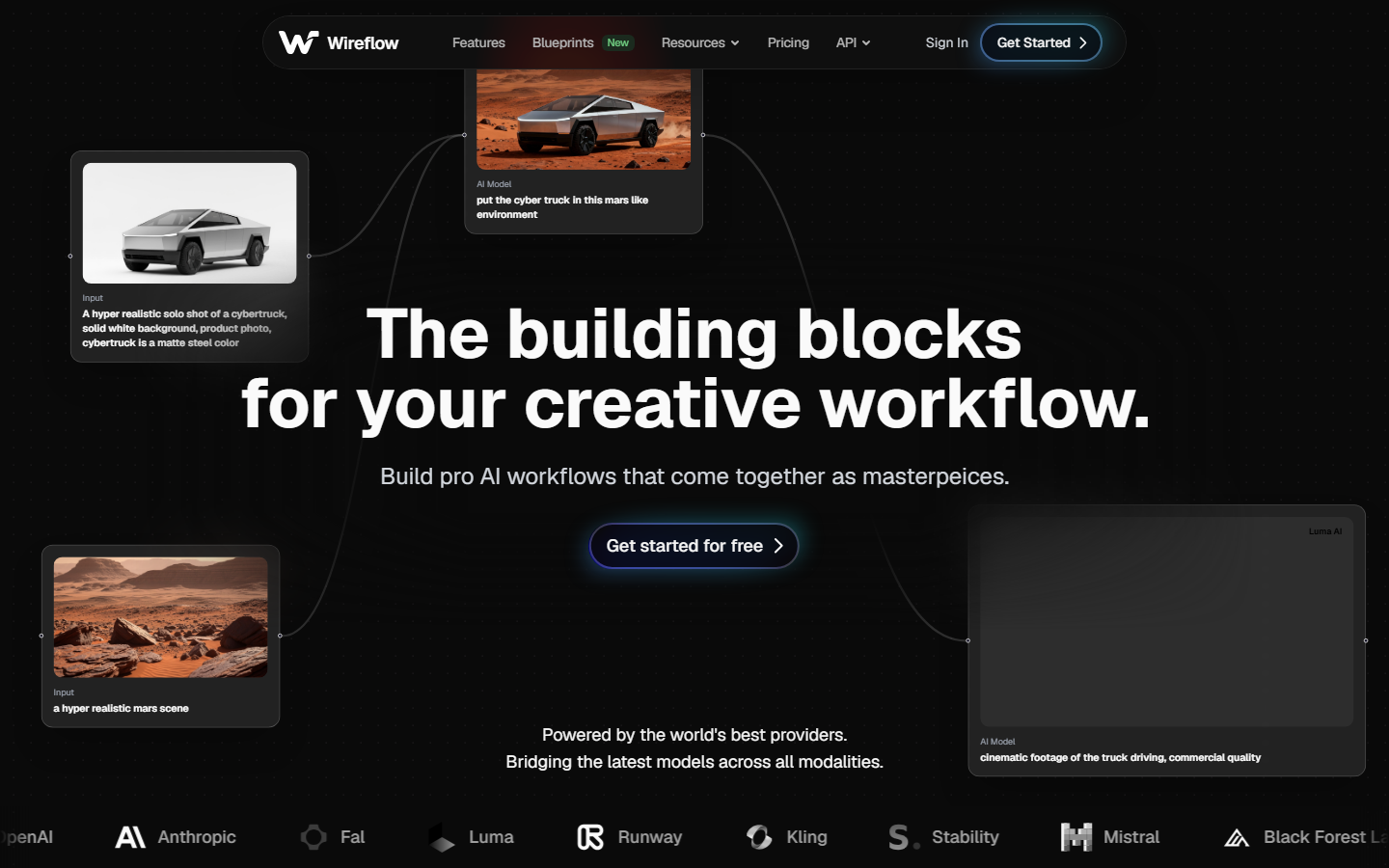

1. Wireflow

Wireflow is not just another single-model API. It gives you a visual, node-based canvas where you connect text, image, and video generation models into reusable workflows. Each published workflow becomes an API endpoint you can call with a single POST request. The platform offers 157+ node types covering models such as Flux 2, Kling 2.5, Imagen 4, and Nano Banana. Rate limits scale by plan, from 10 requests/minute on Free to 200/minute on Enterprise. Execution is asynchronous (start, poll, retrieve), and because no SDK is required, it works with any HTTP client. You can also trigger workflows via webhooks without authentication, which makes integration with CI/CD tools and Zapier straightforward.
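The start, poll, retrieve cycle can be sketched in Python with only the standard library. This is a minimal sketch, not Wireflow's documented client. The base URL, the endpoint paths (`/workflows/{id}/executions`, `/executions/{id}`, `/executions/{id}/outputs`), the Bearer auth header, and the `id`/`status` field names are all hypothetical placeholders, so check wireflow.ai/docs for the real ones. The HTTP call is passed in as `send`, which keeps the polling logic testable without a network.

```python
import json
import time
import urllib.request
from typing import Any, Callable, Dict, Optional

# Hypothetical base URL: replace with the one in Wireflow's API docs.
BASE_URL = "https://api.wireflow.example/v1"

Send = Callable[[str, str, Optional[Dict[str, Any]]], Dict[str, Any]]


def http_json(method: str, url: str, api_key: str,
              body: Optional[Dict[str, Any]] = None) -> Dict[str, Any]:
    """Send one JSON request (Bearer auth assumed) and decode the JSON reply."""
    data = json.dumps(body).encode("utf-8") if body is not None else None
    req = urllib.request.Request(url, data=data, method=method)
    req.add_header("Authorization", f"Bearer {api_key}")
    req.add_header("Content-Type", "application/json")
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())


def run_workflow(send: Send, workflow_id: str, inputs: Dict[str, Any],
                 poll_every: float = 2.0, timeout: float = 300.0,
                 sleep: Callable[[float], None] = time.sleep,
                 clock: Callable[[], float] = time.monotonic) -> Dict[str, Any]:
    """Start a workflow run, poll until it finishes, then fetch its outputs."""
    # 1. Start: the server replies immediately with an execution id.
    started = send("POST", f"{BASE_URL}/workflows/{workflow_id}/executions",
                   {"inputs": inputs})
    execution_id = started["id"]
    deadline = clock() + timeout

    # 2. Poll: ask for the status until it is terminal or we run out of time.
    while True:
        status = send("GET", f"{BASE_URL}/executions/{execution_id}", None)["status"]
        if status == "completed":
            # 3. Retrieve: download the final outputs (e.g. video URLs).
            return send("GET", f"{BASE_URL}/executions/{execution_id}/outputs", None)
        if status == "failed":
            raise RuntimeError(f"execution {execution_id} failed")
        if clock() >= deadline:
            raise TimeoutError(f"execution {execution_id} still {status!r} after {timeout}s")
        sleep(poll_every)
```

In practice you would pass `lambda m, u, b: http_json(m, u, API_KEY, b)` as `send`. The timeout keeps a job that never leaves its running state from blocking your caller forever.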

For a deeper look at how the API handles multi-model execution, check out the Wireflow workflow API page, which covers endpoints, authentication, and code examples.

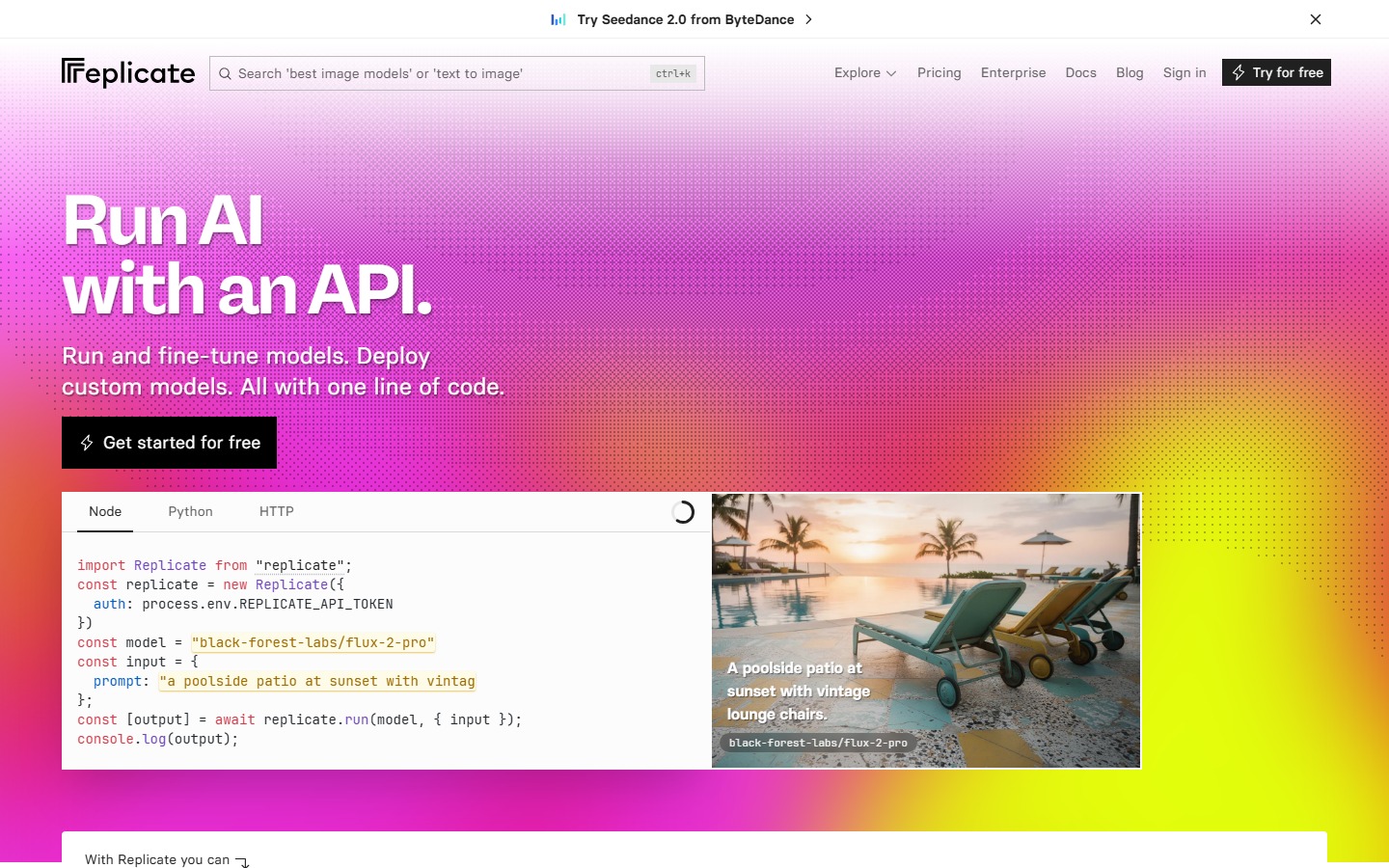

2. Replicate

Replicate hosts thousands of open-source models behind a unified API. You push a model to their infrastructure, and they handle scaling, cold starts, and GPU allocation. For video generation, you can run models like Stable Video Diffusion, AnimateDiff, and community fine-tunes through the same POST /v1/predictions endpoint. Billing is per-second of GPU time rather than per-generation, which means costs stay predictable for batch workloads. The trade-off is that cold starts can take 10-30 seconds for less popular models, and you are limited to models that have been packaged in their Cog container format. If you need to chain multiple models together, you will need to handle the orchestration logic yourself.
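Here is a minimal sketch of that do-it-yourself orchestration, using only Python's standard library. `build_prediction_request` targets the `POST /v1/predictions` endpoint mentioned above with a `version` plus `input` JSON body and a Bearer token. `run_chain` is our own illustrative helper, not part of Replicate's API. `run_prediction` stands in for whatever code you write to create a prediction and wait for it to finish.

```python
import json
import urllib.request
from typing import Any, Callable, Dict, List, Optional, Tuple

PREDICTIONS_URL = "https://api.replicate.com/v1/predictions"


def build_prediction_request(token: str, version: str,
                             model_input: Dict[str, Any]) -> urllib.request.Request:
    """Build (but do not send) a POST /v1/predictions request."""
    body = json.dumps({"version": version, "input": model_input}).encode("utf-8")
    req = urllib.request.Request(PREDICTIONS_URL, data=body, method="POST")
    req.add_header("Authorization", f"Bearer {token}")
    req.add_header("Content-Type", "application/json")
    return req


# The orchestration left to you: each step turns the previous model's
# output into the next model's input.
RunPrediction = Callable[[str, Dict[str, Any]], Any]
Step = Tuple[str, Callable[[Optional[Any]], Dict[str, Any]]]


def run_chain(run_prediction: RunPrediction, steps: List[Step]) -> Any:
    """Run models in sequence; run_prediction creates and awaits one prediction."""
    output: Optional[Any] = None
    for version, build_input in steps:
        output = run_prediction(version, build_input(output))
    return output
```

For example, a two-step chain could pair a hypothetical image-model version with `lambda _: {"prompt": "a red fox in snow"}` and a video-model version with `lambda image_url: {"input_image": image_url}`, so the still image feeds straight into the animation step. The version ids and the `input_image` key are placeholders, because every model defines its own input schema.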

3. Fal.ai

Fal.ai focuses on inference speed. Their serverless architecture keeps warm instances for popular models, which means cold starts often land under 2 seconds. They host Kling, LTXV, Flux, and other video models behind a simple REST API. The queue-based system lets you submit a generation request and poll for results, similar to Runway's approach but without the enterprise contract. Pricing is per-request with transparent credit costs per model. Fal is a strong choice if latency matters for your application, such as real-time AI video generation via API. The downside is that model selection is curated rather than open, so you cannot bring your own custom checkpoints.
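The queue flow can be sketched as follows. This reflects our reading of Fal's queue API: you submit to `https://queue.fal.run/<model-id>` with an `Authorization: Key ...` header, then follow the `status_url` and `response_url` returned by the submit call, seeing statuses such as `IN_QUEUE`, `IN_PROGRESS`, and `COMPLETED`. Treat the exact field names and status values as assumptions to confirm against Fal's docs. Any model id you pass is a placeholder.

```python
import json
import time
import urllib.request
from typing import Any, Callable, Dict, Optional

FAL_QUEUE_URL = "https://queue.fal.run"

Fetch = Callable[[str, str, Optional[Dict[str, Any]]], Dict[str, Any]]


def fal_request(method: str, url: str, fal_key: str,
                body: Optional[Dict[str, Any]] = None) -> urllib.request.Request:
    """Build a queue request using a 'Key <token>' auth header (assumed scheme)."""
    data = json.dumps(body).encode("utf-8") if body is not None else None
    req = urllib.request.Request(url, data=data, method=method)
    req.add_header("Authorization", f"Key {fal_key}")
    if data is not None:
        req.add_header("Content-Type", "application/json")
    return req


def submit_and_wait(fetch: Fetch, model_id: str, arguments: Dict[str, Any],
                    poll_every: float = 1.0,
                    sleep: Callable[[float], None] = time.sleep) -> Dict[str, Any]:
    """Queue a generation, poll its status URL, then fetch the result."""
    queued = fetch("POST", f"{FAL_QUEUE_URL}/{model_id}", arguments)
    # Follow the URLs the queue returns instead of rebuilding them by hand.
    status_url, response_url = queued["status_url"], queued["response_url"]
    while True:
        status = fetch("GET", status_url, None)["status"]
        if status == "COMPLETED":
            return fetch("GET", response_url, None)
        if status not in ("IN_QUEUE", "IN_PROGRESS"):
            raise RuntimeError(f"unexpected queue status: {status}")
        sleep(poll_every)
```

Following the returned URLs keeps the client working even if a model's URL layout differs from what you would guess. A production version would also cap the total wait time.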

4. Luma AI

Luma AI's Dream Machine model excels at spatial understanding, producing videos with consistent depth, perspective, and camera motion. Their API is straightforward: submit a text or image prompt, receive a video URL. Luma stands out for scenes that require realistic 3D movement, such as product turntables, architectural walkthroughs, or character motion. The API supports both text-to-video and image-to-video modes. Pricing is credit-based with plans starting at $29/month. If your use case involves producing AI video content that needs to feel physically grounded rather than stylized, Luma is worth evaluating.

5. Pika

Pika offers one of the most accessible entry points for AI video generation via API. Plans start at $10/month, and the API supports text-to-video, image-to-video, and video extension. Their model handles stylized and creative content well, with controls for motion intensity, aspect ratio, and negative prompts. The API response times are competitive, typically returning results in 30-60 seconds for 4-second clips. Pika also supports lip sync and sound effects. For teams building a video pipeline on a budget, Pika's pricing makes it practical to prototype and iterate without burning through credits.

6. ComfyDeploy

ComfyDeploy takes ComfyUI workflows and turns them into production API endpoints. If you have already built complex generation pipelines in ComfyUI with custom nodes, ControlNet, and IP-Adapter setups, ComfyDeploy lets you expose those exact workflows as REST APIs without rewriting anything. The platform handles GPU provisioning and auto-scaling. This is the strongest option for teams that are already invested in the ComfyUI ecosystem and want API access without managing their own infrastructure. For those comparing this approach with managed platforms, see our ComfyUI alternative comparison. The limitation is that you are still responsible for workflow design and debugging within ComfyUI itself.

7. Stability AI

Stability AI offers API access to Stable Video Diffusion and their image generation models through their developer platform. The open-weight approach means you can also self-host these models if you prefer full control over your infrastructure. Their API follows standard REST conventions with API key authentication and supports both synchronous and asynchronous generation. For teams that need batch generation at scale, self-hosting Stability models on your own GPUs can be more cost-effective than per-request pricing from hosted providers. The trade-off is that self-hosted setups require DevOps expertise and ongoing maintenance.
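Whether self-hosting actually wins comes down to simple arithmetic. Suppose a GPU costs H dollars per hour, a clip takes S GPU-seconds, and a hosted API charges P per clip. Then self-hosting saves P - H*S/3600 per clip, and a fixed monthly overhead F (DevOps time, storage, idle capacity) is recovered after F / (P - H*S/3600) clips. The sketch below encodes that formula with an optional utilization factor. Every price in the usage note is a hypothetical placeholder, not a quote from Stability AI or any other provider.

```python
import math
from fractions import Fraction
from typing import Optional


def _exact(x: float) -> Fraction:
    # Go through str() so 0.05 is exactly 5/100; plain floats could push
    # math.ceil() one clip too high when the exact answer is a whole number.
    return Fraction(str(x))


def gpu_cost_per_clip(gpu_usd_per_hour: float, gpu_seconds_per_clip: float,
                      utilization: float = 1.0) -> Fraction:
    """GPU spend per clip; utilization < 1 spreads idle hours over rendered clips."""
    return (_exact(gpu_usd_per_hour) * _exact(gpu_seconds_per_clip)
            / 3600 / _exact(utilization))


def break_even_clips(api_usd_per_clip: float, gpu_usd_per_hour: float,
                     gpu_seconds_per_clip: float, fixed_usd_per_month: float,
                     utilization: float = 1.0) -> Optional[int]:
    """Monthly clip volume at which self-hosting matches a per-clip API price.

    Returns None when the GPU cost per clip alone exceeds the API price.
    """
    saving = _exact(api_usd_per_clip) - gpu_cost_per_clip(
        gpu_usd_per_hour, gpu_seconds_per_clip, utilization)
    if saving <= 0:
        return None
    return math.ceil(_exact(fixed_usd_per_month) / saving)
```

Take hypothetical inputs of $0.50 per clip from the API, a $2.00/hour GPU, 90 GPU-seconds per clip, and $900/month of overhead. `break_even_clips(0.50, 2.00, 90, 900)` then returns 2000 clips per month, and at 50% utilization the break-even point rises to 2250.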

8. RunPod

RunPod provides serverless GPU endpoints where you deploy any containerized model and get an auto-scaling API. This is the most flexible option if you have custom fine-tuned video models or need specific GPU configurations. You bring your own Docker container, and RunPod handles the infrastructure, scaling, and endpoint management. Pricing is per-second of GPU compute with no minimum commitment. This suits teams that have outgrown managed APIs and want infrastructure-level control. For context on choosing between managed and self-hosted approaches, see our guide to headless AI workflow platforms.
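As we understand RunPod's serverless model, each worker is built around a handler function. The handler receives a job dictionary whose `input` field carries the JSON you send to the endpoint, and `runpod.serverless.start` wires it into the worker inside your container. The sketch below is a minimal example under those assumptions. `generate_video` is a hypothetical stand-in for your own model code, and the `prompt`/`seconds` fields are illustrative, not a RunPod schema.

```python
from typing import Any, Dict


def generate_video(prompt: str, seconds: int) -> str:
    """Hypothetical stand-in for your model: run inference, save the clip, return its path."""
    return f"/tmp/output-{seconds}s.mp4"


def handler(job: Dict[str, Any]) -> Dict[str, Any]:
    """Called once per job; job["input"] holds the caller's input payload."""
    job_input = job.get("input") or {}
    prompt = job_input.get("prompt")
    if not prompt:
        # Fail fast on bad input instead of spending GPU time on it.
        raise ValueError("input.prompt is required")
    seconds = int(job_input.get("seconds", 4))
    return {"video_path": generate_video(prompt, seconds)}


if __name__ == "__main__":
    # Imported here so the handler can be unit-tested without the SDK installed.
    import runpod  # pip install runpod

    runpod.serverless.start({"handler": handler})
```

A client would then POST something like `{"input": {"prompt": "...", "seconds": 6}}` to the endpoint's `/run` URL and poll for the result. This is the same async submit-and-poll pattern the hosted platforms use.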

Feature Comparison

| Platform | API Access | Video Models | Pricing Model | Multi-Model Chaining | Cold Start |
|---|---|---|---|---|---|
| Wireflow | REST API + Webhooks | Kling 2.5, Flux 2, Imagen 4, 157+ nodes | Per-credit, plans from Free | Yes, visual canvas | Warm |
| Replicate | REST API | 1000+ community models | Per-second GPU | Manual (code) | 10-30s |
| Fal.ai | REST API | Kling, LTXV, Flux | Per-request credits | No | Under 2s |
| Luma AI | REST API | Dream Machine | Per-credit, from $29/mo | No | Warm |
| Pika | REST API | Pika proprietary | Per-credit, from $10/mo | No | Moderate |
| ComfyDeploy | REST API | Any ComfyUI model | Per-second GPU | Yes (ComfyUI graphs) | Variable |
| Stability AI | REST API + Self-host | SVD, SDXL | Per-request or self-host | Manual (code) | Variable |
| RunPod | REST API | Any containerized model | Per-second GPU | Manual (code) | Configurable |

The choice comes down to what you value most: breadth of models (Replicate), speed (Fal.ai), budget (Pika), or the ability to chain multiple models into a single API call without writing orchestration code. For current plan details and credit costs, check each platform's pricing page directly.

Try it yourself: Wireflow lets you build multi-model workflows visually and call them through one REST endpoint. The API documentation at wireflow.ai/docs covers how to create, execute, and poll workflows programmatically.

Frequently Asked Questions

What is the main limitation of Runway's API?

Runway's API is not publicly available to individual developers. Access requires an enterprise sales conversation, and pricing is not listed on their website. This makes it difficult for smaller teams or indie developers to integrate AI video generation into their applications.

Can I switch between video models without changing my code?

Platforms like Wireflow and Replicate abstract the model layer behind a unified API. On Wireflow, you swap models by changing a node on the visual canvas without touching your API integration code. Replicate uses model versioning to achieve something similar.

Which alternative is cheapest for low-volume use?

Pika starts at $10/month and offers a generous free tier. Wireflow's free plan includes 50 daily executions with API access. Replicate charges per-second of compute with no monthly minimum, so costs scale to near-zero at low volumes.

Do these APIs support image-to-video generation?

Yes. Wireflow (via Kling 2.5 nodes), Fal.ai, Luma AI, and Pika all support image-to-video. You upload a source image and get back a video that animates it. Replicate supports it through specific models like Stable Video Diffusion. See our guide on animating still images with AI for step-by-step instructions.
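If an API accepts the source image inline rather than as a hosted URL, the usual approach is a base64 data URI. Below is a minimal standard-library sketch. Whether a given endpoint accepts data URIs, and what size limit it applies, varies by platform and model, so treat that as an assumption to check. The field name you put the URI under also comes from each model's input schema.

```python
import base64
import mimetypes
from pathlib import Path


def image_to_data_uri(path: str) -> str:
    """Read an image file and return it as a base64 data URI."""
    mime, _ = mimetypes.guess_type(path)
    if mime is None or not mime.startswith("image/"):
        raise ValueError(f"not a recognized image type: {path}")
    encoded = base64.b64encode(Path(path).read_bytes()).decode("ascii")
    return f"data:{mime};base64,{encoded}"
```

A request payload might then look like `{"input_image": image_to_data_uri("product.png")}`, where `input_image` is a hypothetical field name. For large source images, uploading the file and passing its URL is usually the safer choice.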

Can I self-host any of these models?

Stability AI's models are open-weight and can be self-hosted. RunPod gives you GPU infrastructure to deploy any containerized model. Replicate's Cog format is also open-source, so you can run models locally. Wireflow, Luma, Pika, and Fal.ai are hosted-only services.

What is the typical API response time for video generation?

Most platforms take 30-90 seconds for a 4-second video clip. Fal.ai tends to be the fastest due to warm instances. All platforms use an async pattern where you submit a job and poll for completion rather than waiting on a synchronous request. Check workflow templates for pre-built pipelines that handle polling automatically.

How do webhooks work for these video APIs?

Wireflow supports webhook triggers that start workflow execution without requiring an API key, making it simple to integrate with external services. Other platforms like Replicate support webhook callbacks that notify your server when a prediction completes, so you do not need to poll.
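On the receiving side, a webhook handler should verify that a callback really came from the platform before trusting it. As we understand Replicate's docs, it signs webhooks using the Standard Webhooks scheme. That means `webhook-id`, `webhook-timestamp`, and `webhook-signature` headers, plus an HMAC-SHA256 over `"<id>.<timestamp>.<raw body>"` keyed with the base64 part of a `whsec_...` secret. The sketch below follows that scheme. Confirm the header names and secret format against the platform's current documentation before relying on it.

```python
import base64
import hashlib
import hmac
import time
from typing import Optional


def verify_webhook(secret: str, webhook_id: str, timestamp: str, body: bytes,
                   signature_header: str, tolerance_s: int = 300,
                   now: Optional[float] = None) -> bool:
    """Check a Standard Webhooks style signature on a raw request body."""
    current = time.time() if now is None else now
    if abs(current - int(timestamp)) > tolerance_s:
        return False  # stale or future-dated: treat as a possible replay

    key = base64.b64decode(secret.removeprefix("whsec_"))
    signed = f"{webhook_id}.{timestamp}.".encode("utf-8") + body
    expected = base64.b64encode(hmac.new(key, signed, hashlib.sha256).digest()).decode()

    # The header may carry several space-separated "v1,<signature>" entries.
    for entry in signature_header.split():
        version, _, sig = entry.partition(",")
        if version == "v1" and hmac.compare_digest(sig, expected):
            return True
    return False
```

Verify against the raw bytes you received, before any JSON parsing, because re-serializing the body can change it and break the signature.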

Which platform is best for production applications?

For production use, look at rate limits, uptime SLAs, and error handling. Wireflow offers up to 200 requests/minute on Enterprise with idempotency keys to prevent duplicate executions. Replicate and Fal.ai both provide production tiers with guaranteed capacity. RunPod gives the most infrastructure control but requires more operational overhead.
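Idempotency keys matter most when you retry. Generate one key per logical job and send the same key on every retry. A request that timed out on your side but succeeded on the server is then not executed twice. In this sketch, the `Idempotency-Key` header name is a common convention, assumed rather than taken from Wireflow's docs. `send` and `TransientError` are hypothetical stand-ins for your HTTP client and its retryable failures (timeouts, 429s, 5xx responses).

```python
import time
import uuid
from typing import Any, Callable, Dict


class TransientError(Exception):
    """Raised by the transport for retryable failures (timeouts, 429, 5xx)."""


def start_with_retries(send: Callable[[Dict[str, Any], Dict[str, str]], Dict[str, Any]],
                       payload: Dict[str, Any], max_attempts: int = 4,
                       base_delay: float = 1.0,
                       sleep: Callable[[float], None] = time.sleep) -> Dict[str, Any]:
    """Start a job, retrying transient failures with one stable idempotency key."""
    # One key per logical job, reused on every retry, so the server can
    # recognize a retry of a request it already accepted.
    key = str(uuid.uuid4())
    for attempt in range(1, max_attempts + 1):
        try:
            return send(payload, {"Idempotency-Key": key})
        except TransientError:
            if attempt == max_attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff: 1s, 2s, 4s...
    raise AssertionError("unreachable")
```

The key is created once, outside the loop. A fresh key per attempt would defeat the purpose, since the server would treat every retry as a brand-new execution.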